TIL - Kubernetes

May 08, 2020

What follows are notes from a class. Incomplete thoughts & code that probably will only make sense to me. Given enough time, even that may be a stretch! Reference at your own risk 😂

Slides

Resources

- Kubernetes Docs

- Official Getting Started Tutorials

- The Illustrated Children’s Guide to Kubernetes (YouTube, 8 min)

- Kubernetes By Example (“a hands-on introduction to Kubernetes”)

- kubectl Cheat Sheet

- kubectl Docs

- Jobs & Cronjobs in Kubernetes Cluster (YouTube, 37 min)

How To Run Kubernetes (K8)

- Runs on cloud providers

- Runs locally:

kubectl is the Kubernetes command line interface. There is also a GUI, the Kubernetes Dashboard, which you can open through a local proxy. It shows you the kubectl commands as you go in the GUI, so you can learn. To create it as a service:

$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta8/aio/deploy/recommended.yaml

Then, with kubectl proxy running, it can be opened in a browser at http://localhost:8001/api/v1/namespaces/kubernetes-dashboard/services/https:kubernetes-dashboard:/proxy/

Local Docker Registry

If images are public on Docker Hub it’s fine to skip this step, otherwise create a local registry in your docker-compose file…

# docker-compose.yml
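The compose file from class isn't reproduced in these notes. As a rough sketch only, a registry service could look something like the following, assuming the official `registry:2` image and mapping host port 6000 (the port used in the commands here) to the registry's default port 5000. The service name is made up for illustration.

```yaml
# docker-compose.yml (hypothetical sketch, not the file used in class)
version: "3"
services:
  registry:             # service name is an assumption
    image: registry:2   # official Docker registry image
    restart: always
    ports:
      - "6000:5000"     # host port 6000 -> registry's default port 5000
```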

Start it:

$ docker-compose up -d

Test it:

$ curl -ik 127.0.0.1:6000/v2/_catalog # shows all images

Note: you have to use the IP address instead of localhost to ensure an IPv4 address ¯\_(ツ)_/¯

Push images to the local registry:

$ docker build -t localhost:6000/portcheck:0.0.1 .

$ docker push localhost:6000/portcheck:0.0.1

Access Local Registry from K8

Here are the docs on how to do this. It’s a bit complicated because Kubernetes needs your Docker registry credentials in order to pull from the registry. So, create a secret holding those credentials:

$ sudo kubectl create secret generic regcred --from-file=.dockerconfigjson=/Users/jamessherry/.docker/config.json --type=kubernetes.io/dockerconfigjson

Note the above must use absolute paths, not ~/.docker/config...

To confirm it worked:

$ kubectl get secret regcred --output=yaml

Add to K8 configuration files:

imagePullSecrets:
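The rest of that config isn't in these notes. A minimal sketch of where `imagePullSecrets` sits in a pod spec, reusing the `regcred` secret and the `portcheck` image from above (the pod and container names are assumptions):

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: portcheck                # hypothetical pod name
spec:
  containers:
    - name: portcheck            # hypothetical container name
      image: localhost:6000/portcheck:0.0.1   # image pushed to the local registry
  imagePullSecrets:
    - name: regcred              # the secret created with kubectl create secret
```

In a deployment the same two lines go under `spec.template.spec`, next to `containers`.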

Kubernetes Basics

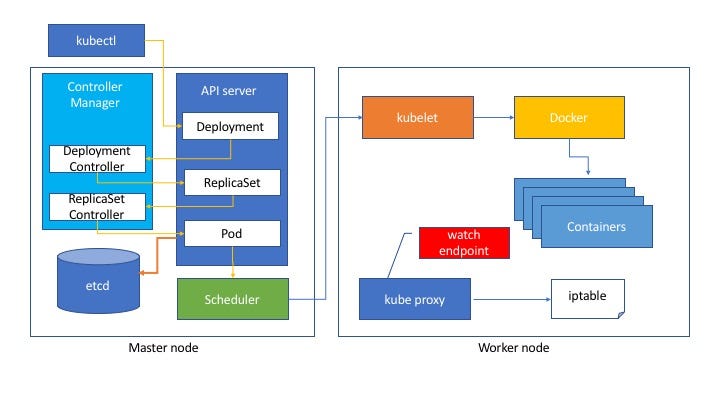

This image comes from Kubernetes in three diagrams, which goes into more depth, but here is the basic hierarchy:

0. Application, db server, etc.

1. Container (Docker)

2. Pod

3. Nodes

4. Replica Set

5. Deployment

6. Service (exposure of a deployment)

To explain…

- 1 is a container for 0, 2 is a container for 1, and so on.

- A pod is also referred to as a “Kubernetes object”…it’s an environment for containers

- Different pods do different things (app server, db, etc.)

- When you kill a pod you can’t bring it back

More info on all of this is in the three diagrams post above, and in the class slides.

A deployment is the magic of K8. You configure your “desired state”, and the deployment controller attempts to achieve that state on its own by switching out old containers/pods/replica sets for new ones, with the aim of reaching that desired state. This makes it possible to do rolling updates, scaling, auto-scaling, rollbacks, etc. with zero downtime.

For the most part K8 spins things up and down in no particular order…it does what it needs to do to achieve the desired state. But if you have something like interdependent database servers that need to be spun up in order, a StatefulSet creates the pods one at a time, in order.
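To make the ordering concrete, here is a minimal StatefulSet sketch. All names and the image are placeholders, not from the class. With the default pod management policy, the pods are created one at a time as `db-0`, `db-1`, `db-2`, each waiting for the previous one to be ready.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: db                       # hypothetical name; pods become db-0, db-1, db-2
spec:
  serviceName: db                # headless Service, assumed to be defined separately
  replicas: 3
  selector:
    matchLabels:
      app: db
  template:
    metadata:
      labels:
        app: db                  # must match the selector above
    spec:
      containers:
        - name: db
          image: example/db:1.0  # placeholder image, swap in your database image
```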

Secrets

Don’t use yaml for secrets. Here are some best practices for working with secrets (auth keys, API credentials, etc.).

etcd = the secret store (it’s the cluster’s key-value store, and by default secrets are not encrypted there)

Examples of creating secrets:

$ kubectl create secret generic [name] --from-literal=key=value
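Once a secret exists, a pod can reference it by name without the value ever appearing in the YAML. A small sketch, assuming the secret was created as `my-secret` with a literal called `key` (both names, and the environment variable, are made up for illustration):

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: secret-demo              # hypothetical pod name
spec:
  containers:
    - name: app
      image: nginx
      env:
        - name: API_KEY          # hypothetical env var seen by the container
          valueFrom:
            secretKeyRef:
              name: my-secret    # the [name] given to kubectl create secret
              key: key           # the key from --from-literal=key=value
```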

Random facts that are useful in the context of different slides

A persistent volume claim is what you need to access a persistent volume. It can limit how much of the persistent volume you have permission to use. Usually issued by a K8 manager.
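For reference, a claim is a small object of its own. A minimal sketch, with a made-up name and an arbitrary size:

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: data-claim               # hypothetical claim name
spec:
  accessModes:
    - ReadWriteOnce              # mountable read-write by a single node
  resources:
    requests:
      storage: 1Gi               # how much of the volume this claim asks for
```

A pod then mounts it by listing the claim name under `volumes` with `persistentVolumeClaim.claimName`.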

A label can be referred to across the cluster. By analogy with HTML: a name is like an id, a label is like a class, and the K8 config is like the HTML.

When you create a deployment it creates the replica set and the pods all in one go, and the pods get scheduled onto the cluster’s existing nodes.

After creating it, you port-forward to make the contents accessible from outside the container world.

There are options for health checking…for example, if failureThreshold is met, K8 will just trash the container and make a new one
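A liveness probe is one of those health-check options. A minimal sketch, assuming an nginx container that answers on port 80; the timing values are arbitrary examples:

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: probe-demo               # hypothetical pod name
spec:
  containers:
    - name: web
      image: nginx
      livenessProbe:
        httpGet:
          path: /                # endpoint the kubelet polls
          port: 80
        initialDelaySeconds: 5   # wait before the first check
        periodSeconds: 10        # check every 10 seconds
        failureThreshold: 3      # restart the container after 3 failed checks in a row
```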

Demo Deploying a Basic HTTP Server

Create deployment:

$ kubectl create deployment nginx --image=nginx # pulling from Docker Hub

Expose Service:

$ kubectl port-forward [podname] 8080:80

Test that it worked:

- You can hit the forwarded port (8080), for example with curl localhost:8080

- You can attach to the pod: kubectl exec -it [podname] -- /bin/bash

- You can get info on pods from the dashboard:

$ kubectl proxy

- You can get descriptions (same for services, deployments, etc.):

$ kubectl get pods # (short detail)

$ kubectl describe pods # (full detail)

- You can see logs:

$ kubectl logs [--follow] <entity>

Be careful of mistakes on cloud servers…if you accidentally create an endless loop of creating/destroying, you’re getting charged for each occurrence. Read Managing Resources for Containers. Set resource limits to fit within the limits of your EC2 instance, for example.
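Requests and limits are set per container. A minimal sketch with arbitrary example numbers (size them to whatever your instance can actually provide):

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: limits-demo              # hypothetical pod name
spec:
  containers:
    - name: web
      image: nginx
      resources:
        requests:                # what the scheduler reserves for the container
          cpu: 250m              # a quarter of a CPU core
          memory: 64Mi
        limits:                  # hard ceiling enforced at runtime
          cpu: 500m
          memory: 128Mi
```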

All Kubernetes Instructions Are Made in YAML

$ kubectl create -f <something>.yaml # create some structure (pod, deployment, etc.)

Here’s a demo deployment config file:

apiVersion: apps/v1
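Only the first line of the class config survived in these notes. As a stand-in, here is a minimal deployment sketch. The name `basic-server` is borrowed from the rollout command in the next section; the labels, replica count and nginx image are assumptions.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: basic-server
spec:
  replicas: 2                    # desired number of pods (example value)
  selector:
    matchLabels:
      app: basic-server          # which pods this deployment manages
  template:                      # pod template stamped out by the replica set
    metadata:
      labels:
        app: basic-server        # must match the selector above
    spec:
      containers:
        - name: web
          image: nginx           # assumed image, pulled from Docker Hub
          ports:
            - containerPort: 80
```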

Rollouts & Scaling Commands

$ kubectl rollout undo deployment.v1.apps/basic-server

If you want K8 to store more than the default 10 historical replica sets you can change it in the yaml with spec.revisionHistoryLimit.
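The field sits at the top level of the deployment's `spec`. A fragment only, with an arbitrary example value:

```yaml
# Fragment of a Deployment manifest; the rest of the spec is omitted
spec:
  revisionHistoryLimit: 20       # keep 20 old replica sets instead of the default 10
```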